Exact sampling¶

Consider a finite DPP defined by its correlation kernel \(\mathbf{K}\) (1) or likelihood kernel \(\mathbf{L}\) (2). There exist three main types of exact sampling procedures:

The spectral method (used by default) requires the eigendecomposition of the correlation kernel \(\mathbf{K}\) or the likelihood kernel \(\mathbf{L}\). It is based on the fact that generic DPPs are mixtures of projection DPPs together with the application of the chain rule to sample projection DPPs. It is presented in Section Spectral method.

A Cholesky-based procedure which requires the correlation kernel \(\mathbf{K}\) (even non-Hermitian!). It boils down to applying the chain rule on sets; where each item in turn is decided to be excluded or included in the sample. It is presented in Section Cholesky-based method.

Recently, [DerezinskiCV19] have also proposed an alternative method to get exact samples: first sample an intermediate distribution and correct the bias by thinning the intermediate sample using a carefully designed DPP. This approach does not require a Cholesky/Eigen-decomposition of the DPP, but the runtime instead scale with the expected sample size of the DPP (see Number of points). It is presented in Section Intermediate sampling method. A more refined procedure based on this approach was introduced in [CDerezinskiV20] for k-DPP sampling.

In general, for small \(N\) (i.e. less than 1000) spectral or cholesky samplers are recommended for numerical stability. For larger \(N\) (i.e. up to millions) and moderate \(k\) (i.e. in the hundreds) intermediate sampling is recommended for scalability.

The following table summarizes the complexity of all exact samplers currently available, where the expected sample size \(\mathbb{E}[|X|]\) is equal to \(k\) for k-DPPs and \(d_{\text{eff}}\) for random-sized DPPs.

|

Time to first sample |

Time to subsequent samples |

Notes |

|||

|---|---|---|---|---|---|---|

DPP |

k-DPP |

DPP |

k-DPP |

|||

Spectral sampler |

|

\(O(N^3)\) |

\(O(N^3)\) |

\(O(N d_{\text{eff}}^2)\) |

\(O(N k^2)\) |

The three variants differ slightly, and depending on the DPP they can have different numerical stability. |

Cholesky sampler |

|

\(O(N^3)\) |

\(O(N^3)\) |

\(O(N^3)\) |

\(O(N^3)\) |

Also works for non-Hermitian DPPs. |

Intermediate sampler |

|

\(O(N d_{\text{eff}}^6)\) |

\(O(N k^{10} + k^{15})\) |

\(O(d_{\text{eff}}^6)\) |

\(O(k^6)\) |

For |

|

\(O(N d_{\text{eff}}^5)\) |

\(O(N k^6/d_{\text{eff}} + k^9)\) |

\(O(d_{\text{eff}}^6)\) |

\(O(k^6)\) |

||

Note

There exist specific samplers for special DPPs, like the ones presented in Section Exotic DPPs.

Important

In the next section, we describe the Algorithm 18 of [HKPVirag06], based on the chain rule, which was originally designed to sample continuous projection DPPs. Obviously, it has found natural a application in the finite setting for sampling projection \(\operatorname{DPP}(\mathbf{K})\). However, we insist on the fact that this chain rule mechanism is specific to orthogonal projection kernels. In particular, it cannot be applied blindly to sample general \(k\!\operatorname{-DPP}(\mathbf{L})\) but it is valid when \(\mathbf{L}\) is an orthogonal projection kernel.

This crucial point is developed in the following Caution section.

Projection DPPs: the chain rule¶

In this section, we describe the generic projection DPP sampler, originally derived by [HKPVirag06] Algorithm 18.

Recall that the number of points of a projection \(r=\operatorname{DPP}(\mathbf{K})\) is, almost surely, equal to \(\operatorname{rank}(K)\), so that the likelihood (13) of \(S=\{s_1, \dots, s_r\}\) reads

Using the invariance by permutation of the determinant and the fact that \(\mathbf{K}\) is an orthogonal projection matrix, it is sufficient to apply the chain rule to sample \((s_1, \dots, s_r)\) with joint distribution

and forget about the sequential feature of the chain rule to get a valid sample \(\{s_1, \dots, s_r\} \sim \operatorname{DPP}(\mathbf{K})\).

Considering \(S=\{s_1, \dots, s_r\}\) such that \(\mathbb{P}[\mathcal{X}=S] = \det \mathbf{K}_S > 0\), the following generic formulation of the chain rule

can be expressed as a telescopic ratio of determinants

where \(S_{i-1} = \{s_{1}, \dots, s_{i-1}\}\).

Using Woodbury’s formula the ratios of determinants in (17) can be expanded into

Hint

MLers will recognize in (18) the incremental posterior variance of the Gaussian Process (GP) associated to \(\mathbf{K}\), see [RW06] Equation 2.26.

See also

Algorithm 18 [HKPVirag06]

Projection DPPs: the chain rule in the continuous case

Geometrical interpretation¶

Hint

Since \(\mathbf{K}\) is an orthogonal projection matrix, the following Gram factorizations provide an insightful geometrical interpretation of the chain rule mechanism (17):

1. Using \(\mathbf{K} = \mathbf{K}^2\) and \(\mathbf{K}^{\dagger}=\mathbf{K}\), we have \(\mathbf{K} = \mathbf{K} \mathbf{K}^{\dagger}\), so that the chain rule (17) becomes

Using the eigendecomposition, we can write \(\mathbf{K} = U U^{\dagger}\) where \(U^{\dagger} U = I_r\), so that the chain rule (17) becomes

In other words, the chain rule formulated as (19) and (20) is akin to do Gram-Schmidt orthogonalization of the feature vectors \(\mathbf{K}_{i,:}\) or \(\mathbf{U}_{i,:}\). These formulations can also be understood as an application of the base \(\times\) height formula. In the end, projection DPPs favors sets of \(r=\operatorname{rank}(\mathbf{K})\) of items are associated to feature vectors that span large volumes. This is another way of understanding diversity.

In practice¶

The cost of getting one sample from a projection DPP is of order \(\mathcal{O}(N\operatorname{rank}(\mathbf{K})^2)\), whenever \(\operatorname{DPP}(\mathbf{K})\) is defined through

\(\mathbf{K}\) itself; sampling relies on formulations (19) or (18)

import numpy as np from scipy.linalg import qr from dppy.finite_dpps import FiniteDPP seed = 0 rng = np.random.RandomState(seed) r, N = 4, 10 eig_vals = np.ones(r) # For projection DPP eig_vecs, _ = qr(rng.randn(N, r), mode='economic') DPP = FiniteDPP(kernel_type='correlation', projection=True, **{'K': (eig_vecs * eig_vals).dot(eig_vecs.T)}) for mode in ('GS', 'Schur', 'Chol'): # default: GS rng = np.random.RandomState(seed) DPP.flush_samples() for _ in range(10): DPP.sample_exact(mode=mode, random_state=rng) print(DPP.sampling_mode) print(DPP.list_of_samples)

GS [[5, 7, 2, 1], [4, 6, 2, 9], [9, 2, 6, 4], [5, 9, 0, 1], [0, 8, 6, 7], [9, 6, 2, 7], [0, 6, 2, 9], [5, 2, 1, 8], [5, 4, 0, 8], [5, 6, 9, 1]] Schur [[5, 7, 2, 1], [4, 6, 2, 9], [9, 2, 6, 4], [5, 9, 0, 1], [0, 8, 6, 7], [9, 6, 2, 7], [0, 6, 2, 9], [5, 2, 1, 8], [5, 4, 0, 8], [5, 6, 9, 1]] Chol [[5, 7, 6, 0], [4, 6, 5, 7], [9, 5, 0, 1], [5, 9, 2, 4], [0, 8, 1, 7], [9, 0, 5, 1], [0, 6, 5, 9], [5, 0, 1, 9], [5, 0, 2, 8], [5, 6, 9, 1]]

See also

[HKPVirag06] Theorem 7, Algorithm 18 and Proposition 19, for the original idea

[Pou19] Algorithm 3, for the equivalent Cholesky-based perspective with cost of order \(\mathcal{O}(N \operatorname{rank}(\mathbf{K})^2)\)

its eigenvectors \(U\), i.e., \(\mathbf{K}=U U^{\dagger}\) with \(U^{\dagger}U = I_{\operatorname{rank}(\mathbf{K})}\); sampling relies on (20)

import numpy as np from scipy.linalg import qr from dppy.finite_dpps import FiniteDPP seed = 0 rng = np.random.RandomState(seed) r, N = 4, 10 eig_vals = np.ones(r) # For projection DPP eig_vecs, _ = qr(rng.randn(N, r), mode='economic') DPP = FiniteDPP(kernel_type='correlation', projection=True, **{'K_eig_dec': (eig_vals, eig_vecs)}) rng = np.random.RandomState(seed) for _ in range(10): # mode='GS': Gram-Schmidt (default) DPP.sample_exact(mode='GS', random_state=rng) print(DPP.list_of_samples)

[[5, 7, 2, 1], [4, 6, 2, 9], [9, 2, 6, 4], [5, 9, 0, 1], [0, 8, 6, 7], [9, 6, 2, 7], [0, 6, 2, 9], [5, 2, 1, 8], [5, 4, 0, 8], [5, 6, 9, 1]]

See also

[HKPVirag06] Theorem 7, Algorithm 18 and Proposition 19, for the original idea

[KT12] Algorithm 1, for a first interpretation of the spectral counterpart of [HKPVirag06] Algorithm 18 running in \(\mathcal{O}(N \operatorname{rank}(\mathbf{K})^3)\)

[Gil14] Algorithm 2, for the \(\mathcal{O}(N \operatorname{rank}(\mathbf{K})^2)\) implementation

[TBA18] Algorithm 3, for a technical report on DPP sampling

Spectral method¶

Main idea¶

The procedure stems from Theorem 7 of [HKPVirag06], i.e., the fact that generic DPPs are mixtures of projection DPPs, suggesting the following two steps algorithm. Given the spectral decomposition of the correlation kernel \(\mathbf{K}\)

Step 1. Draw independent Bernoulli random variables \(B_n \sim \operatorname{\mathcal{B}er}(\lambda_n)\) for \(n=1,\dots, N\) and collect \(\mathcal{B}=\left\{ n ~;~ B_n=1 \right\}\),

Step 2. Sample from the projection DPP with correlation kernel \(U_{:\mathcal{B}} {U_{:\mathcal{B}}}^{\dagger} = \sum_{n\in \mathcal{B}} u_n u_n^{\dagger}\), see the section above.

Note

Step 1. selects a component of the mixture while Step 2. requires sampling from the corresponding projection DPP, cf. Projection DPPs: the chain rule

In practice¶

Sampling projection \(\operatorname{DPP}(\mathbf{K})\) from the eigendecomposition of \(\mathbf{K}=U U^{\dagger}\) with \(U^{\dagger}U = I_{\operatorname{rank}(\mathbf{K})}\)) was presented in the section above

Sampling \(\operatorname{DPP}(\mathbf{K})\) from \(0_N \preceq\mathbf{K} \preceq I_N\) can be done by following

Step 0. compute the eigendecomposition of \(\mathbf{K} = U \Lambda U^{\dagger}\) in \(\mathcal{O}(N^3)\).

Step 1. draw independent Bernoulli random variables \(B_n \sim \operatorname{\mathcal{B}er}(\lambda_n)\) for \(n=1,\dots, N\) and collect \(\mathcal{B}=\left\{ n ~;~ B_n=1 \right\}\)

Step 2. sample from the projection DPP with correlation kernel defined by its eigenvectors \(U_{:, \mathcal{B}}\)

Important

Step 0. must be performed once and for all in \(\mathcal{O}(N^3)\). Then the average cost of getting one sample by applying Steps 1. and 2. is \(\mathcal{O}(N \mathbb{E}\left[|\mathcal{X}|\right]^2)\), where \(\mathbb{E}\left[|\mathcal{X}|\right]=\operatorname{trace}(\mathbf{K})=\sum_{n=1}^{N} \lambda_n\).

from numpy.random import RandomState from scipy.linalg import qr from dppy.finite_dpps import FiniteDPP rng = RandomState(0) r, N = 4, 10 eig_vals = rng.rand(r) # For projection DPP eig_vecs, _ = qr(rng.randn(N, r), mode='economic') DPP = FiniteDPP(kernel_type='correlation', projection=False, **{'K': (eig_vecs*eig_vals).dot(eig_vecs.T)}) for _ in range(10): # mode='GS': Gram-Schmidt (default) DPP.sample_exact(mode='GS', random_state=rng) print(DPP.list_of_samples)

[[7, 0, 1, 4], [6], [0, 9], [0, 9], [8, 5], [9], [6, 5, 9], [9], [3, 0], [5, 1, 6]]

Sampling \(\operatorname{DPP}(\mathbf{L})\) from \(\mathbf{L} \succeq 0_N\) can be done by following

Step 0. compute the eigendecomposition of \(\mathbf{L} = V \Gamma V^{\dagger}\) in \(\mathcal{O}(N^3)\).

Step 1. is adapted to: draw independent Bernoulli random variables \(B_n \sim \operatorname{\mathcal{B}er}(\frac{\gamma_n}{1+\gamma_n})\) for \(n=1,\dots, N\) and collect \(\mathcal{B}=\left\{ n ~;~ B_n=1 \right\}\)

Step 2. is adapted to: sample from the projection DPP with correlation kernel defined by its eigenvectors \(V_{:,\mathcal{B}}\).

Important

Step 0. must be performed once and for all in \(\mathcal{O}(N^3)\). Then the average cost of getting one sample by applying Steps 1. and 2. is \(\mathcal{O}(N \mathbb{E}\left[|\mathcal{X}|\right]^2)\), where \(\mathbb{E}\left[|\mathcal{X}|\right]=\operatorname{trace}(\mathbf{L(I+L)^{-1}})=\sum_{n=1}^{N} \frac{\gamma_n}{1+\gamma_n}\)

from numpy.random import RandomState from scipy.linalg import qr from dppy.finite_dpps import FiniteDPP rng = RandomState(0) r, N = 4, 10 phi = rng.randn(r, N) DPP = FiniteDPP(kernel_type='likelihood', projection=False, **{'L': phi.T.dot(phi)}) for _ in range(10): # mode='GS': Gram-Schmidt (default) DPP.sample_exact(mode='GS', random_state=rng) print(DPP.list_of_samples)

[[3, 1, 0, 4], [9, 6], [4, 1, 3, 0], [7, 0, 6, 4], [5, 0, 7], [4, 0, 2], [5, 3, 8, 4], [0, 5, 2], [7, 0, 2], [6, 0, 3]]

Sampling a \(\operatorname{DPP}(\mathbf{L})\) for which each item is represented by a \(d\leq N\) dimensional feature vector, all stored in a feature matrix \(\Phi \in \mathbb{R}^{d\times N}\), so that \(\mathbf{L}=\Phi^{\top} \Phi \succeq 0_N\), can be done by following

Step 0. compute the so-called dual kernel \(\tilde{L}=\Phi \Phi^{\dagger}\in \mathbb{R}^{d\times}\), eigendecompose it \(\tilde{\mathbf{L}} = W \Delta W^{\top}\) and recover the eigenvectors of \(\mathbf{L}\) as \(V=\Phi^{\top}W \Delta^{-\frac{1}{2}}\) This corresponds to a cost of order \(\mathcal{O}(Nd^2 + d^3 + d^2 + Nd^2)\).

Step 1. is adapted to: draw independent Bernoulli random variables \(B_i \sim \operatorname{\mathcal{B}er}(\frac{\delta_i}{1+\delta_i})\) for \(i=1,\dots, d\) and collect \(\mathcal{B}=\left\{ i ~;~ B_i=1 \right\}\)

Step 2. is adapted to: sample from the projection DPP with correlation kernel defined by its eigenvectors \(V_{:,\mathcal{B}} = \left[\Phi^{\top} W \Delta^{-1/2}\right]_{:,\mathcal{B}}\).

Important

Step 0. must be performed once and for all in \(\mathcal{O}(Nd^2 + d^3)\). Then the average cost of getting one sample by applying Steps 1. and 2. is \(\mathcal{O}(N \mathbb{E}\left[|\mathcal{X}|\right]^2)\), where \(\mathbb{E}\left[|\mathcal{X}|\right]=\operatorname{trace}(\mathbf{\tilde{L}(I+\tilde{L})^{-1}})=\sum_{i=1}^{d} \frac{\delta_i}{1+\delta_i}\leq d\)

See also

For a different perspective see

from numpy.random import RandomState from scipy.linalg import qr from dppy.finite_dpps import FiniteDPP rng = RandomState(0) r, N = 4, 10 phi = rng.randn(r, N) # L = phi.T phi, L_dual = phi phi.T DPP = FiniteDPP(kernel_type='likelihood', projection=False, **{'L_gram_factor': phi}) for _ in range(10): # mode='GS': Gram-Schmidt (default) DPP.sample_exact(mode='GS', random_state=rng) print(DPP.list_of_samples)

L_dual = Phi Phi.T was computed: Phi (dxN) with d<N [[9, 0, 2, 3], [0, 1, 5, 2], [7, 0, 9, 4], [2, 0, 3], [6, 4, 0, 3], [5, 0, 6, 3], [0, 6, 3, 9], [4, 0, 9], [7, 3, 9, 4], [9, 4, 3]]

Cholesky-based method¶

Main idea¶

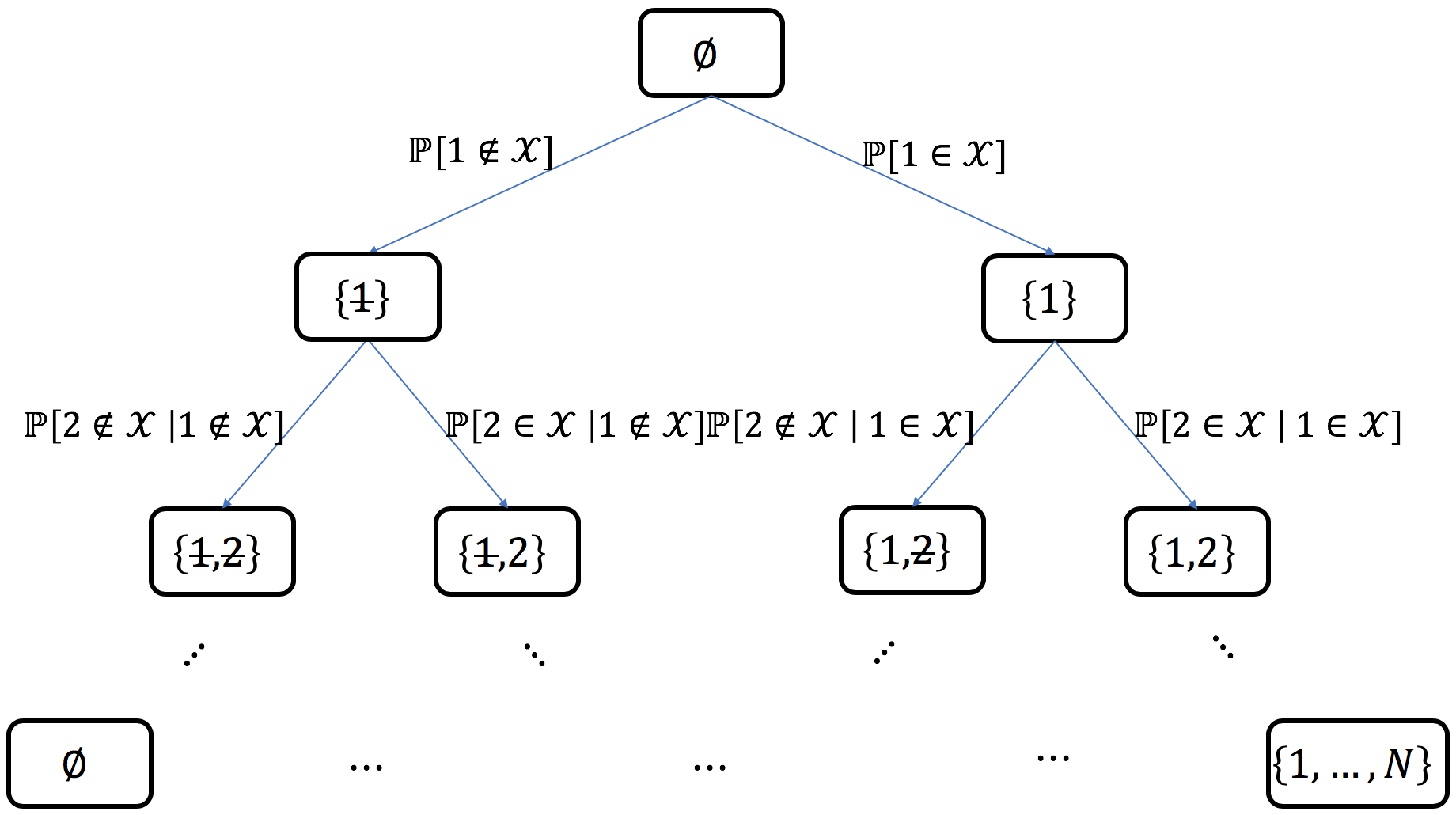

This method requires access to the correlation kernel \(\mathbf{K}\) to perform a bottom-up chain rule on sets: starting from the empty set, each item in turn is decided to be added or excluded from the sample. This can be summarized as the exploration of the binary probability tree displayed in Fig. 7.

Fig. 7 Probability tree corresponding to the chain rule on sets¶

Example: for \(N=5\), if \(\left\{ 1, 4 \right\}\) was sampled, the path in the probability tree would correspond to

where each conditional probability can be written in closed formed using (15) and (16), namely

Important

This quantities can be computed efficiently as they appear in the computation of the Cholesky-type \(LDL^{\dagger}\) or \(LU\) factorization of the correlation \(\mathbf{K}\) kernel, in the Hermitian or non-Hermitian case, respectively. See [Pou19] for the details.

Note

The sparsity of \(\mathbf{K}\) can be leveraged to derive faster samplers using the correspondence between the chain rule on sets and Cholesky-type factorizations, see e.g., [Pou19] Section 4.

In practice¶

Important

The method is fully generic since it applies to any (valid), even non-Hermitian, correlation kernel \(\mathbf{K}\).

Each sample costs \(\mathcal{O}(N^3)\).

Nevertheless, the link between the chain rule on sets and Cholesky-type factorization is nicely supported by the surprisingly simple implementation of the corresponding generic sampler.

# Poulson (2019, Algorithm 1) pseudo-code

sample = []

A = K.copy()

for j in range(N):

if np.random.rand() < A[j, j]: # Bernoulli(A_jj)

sample.append(j)

else:

A[j, j] -= 1

A[j+1:, j] /= A[j, j]

A[j+1:, j+1:] -= np.outer(A[j+1:, j], A[j, j+1:])

return sample, A

from numpy.random import RandomState

from scipy.linalg import qr

from dppy.finite_dpps import FiniteDPP

rng = RandomState(1)

r, N = 4, 10

eig_vals = rng.rand(r)

eig_vecs, _ = qr(rng.randn(N, r), mode='economic')

DPP = FiniteDPP(kernel_type='correlation',

projection=False,

**{'K': (eig_vecs*eig_vals).dot(eig_vecs.T)})

for _ in range(10):

DPP.sample_exact(mode='Chol', random_state=rng)

print(DPP.list_of_samples)

[[2, 9], [0], [2], [6], [4, 9], [2, 7, 9], [0], [1, 9], [0, 1, 2], [2]]

Intermediate sampling method¶

Main idea¶

This method is based on the concept of a distortion-free intermediate sample, where we draw a larger sample of points in such a way that we can then downsample to the correct DPP distribution. We assume access to the likelihood kernel \(\mathbf{L}\) (although a variant of this method also exists for projection DPPs). Crucially the sampling relies on an important connection between DPPs and so-called ridge leverage scores (RLS, see [AM15]), which are commonly used for sampling in randomized linear algebra. Namely, the marginal probability of the i-th point in \(\mathcal{X} \sim \operatorname{DPP}(\mathbf{L})\) is also the i-th ridge leverage score of \(\mathbf{L}\) (with ridge parameter equal 1):

Suppose that we draw a sample \(\sigma\) of \(t\) points i.i.d. proportional to ridge leverage scores, i.e., \(\sigma=(\sigma_1, \sigma_2,...,\sigma_t)\) such that \(\mathbb{P}[\sigma_j=i]\propto\tau_i\). Intuitively, this sample is similar fo \(\mathcal{X}\sim \operatorname{DPP}(\mathbf{L})\) because the marginals are the same, but it “ignores” all the dependencies between the points. However, if we sample sufficiently many points i.i.d. according to RLS, then a proper sample \(\mathcal{X}\) will likely be contained within \(\sigma\). This can be formally shown for \(t = O(\mathbb{E}[|\mathcal{X}|]^2)\). When \(\mathbb{E}[|\mathcal{X}|]^2\ll N\), then this allows us to reduce the size of the DPP kernel \(\mathbf{L}\) from \(N\times N\) to a much smaller size \(\mathbf{\tilde{L}}\) \(t\times t\). Making this sampling exact requires considerably more care, because even with a large \(t\) there is always a small probability that the i.i.d. sample \(\sigma\) is not sufficiently diverse. We guard against this possibility by rejection sampling.

Important

Use this method for sampling \(\mathcal{X} \sim \operatorname{DPP}(\mathbf{L})\) when \(\mathbb{E}\left[|\mathcal{X}|\right]\ll\sqrt{N}\).

Preprocessing costs \(\mathcal{O}\big(N\cdot \text{poly}(\mathbb{E}\left[|\mathcal{X}|\right])\, \text{polylog}(N)\big)\).

Each sample costs \(\mathcal{O}\big(\mathbb{E}[|\mathcal{X}|]^6\big)\).

There are two implementations of intermediate sampling available in dppy: the mode='vfx' sampler and the mode='alpha' sampler.

In practice¶

from numpy.random import RandomState

from dppy.finite_dpps import FiniteDPP

from dppy.utils import example_eval_L_linear

rng = RandomState(1)

r, N = 4, 10

DPP = FiniteDPP('likelihood',

**{'L_eval_X_data': (example_eval_L_linear, rng.randn(N, r))})

for _ in range(10):

DPP.sample_exact(mode='vfx', random_state=rng, verbose=False)

print(DPP.list_of_samples)

[[5, 1, 0, 3], [9, 0, 8, 3], [6, 4, 1], [5, 1, 2], [2, 1, 3], [3, 8, 4, 0], [0, 8, 1], [7, 8], [1, 8, 2, 0], [5, 8, 3]]

The verbose=False flag is used to suppress the default progress bars when running in batch mode (e.g. when generating these docs).

Given, the RLS \(\tau_1,\dots,\tau_N\), the normalization constant \(\det(I+\tilde{\mathbf L}_\sigma)\) and access to the likelihood kernel \(\tilde{\mathbf L}_\sigma\), the intermediate sampling method proceeds as follows:

It can be shown that \(\mathcal{X}\) is distributed exactly according to \(\operatorname{DPP}(\mathbf{L})\) and the expected number of rejections is a small constant. The intermediate likelihood kernel \(\tilde{\mathbf L}_\sigma\) forms a \(t\times t\) DPP subproblem that can be solved using any other DPP sampler.

Since the size of the intermediate sample is \(t=\mathcal{O}(\mathbb{E}[\mathcal{X}]^2)\), the primary cost of the sampling is computing \(\det(I+\tilde{\mathbf L}_\sigma)\) which takes \(\mathcal{O}(t^3)=\mathcal{O}(\mathbb{E}[\mathcal{X}]^6)\) time. This is also the expected cost of sampling from \(\operatorname{DPP}(\tilde{\mathbf{L}}_{\sigma})\) if we use, for example, the spectral method.

The algorithm requires precomputing the RLS \(\tau_1,\dots,\tau_n\) and \(\det(I+\mathbf L)\). Computing them exactly takes \(\mathcal{O}(N^3)\), however, surprisingly, if we use sufficiently accurate approximations then the exactness of the sampling can be retained (details in [DerezinskiCV19]). Efficient methods for approximating leverage scores (see [RCCR18]) bring the precomputing cost down to \(\mathcal{O}(N \text{poly}(\mathbb{E}\left[|\mathcal{X}|\right]) \text{polylog}(N))\).

When \(\mathbb{E}[|\mathcal{X}|]\) is sufficiently small, the entire sampling procedure only looks at a small fraction of the entries of \(\mathbf{L}\). This makes the method useful when we want to avoid constructing the entire likelihood kernel.

When the likelihood kernel is given implicitly via a matrix \(\mathbf{X}\) such that \(\mathbf{L}=\mathbf{X}\mathbf{X}^\top\) (dual formulation) then a version of this method is given by [Derezinski19]

A variant of this method also exists for projection DPPs [DWH18]

See also

[DerezinskiCV19] (Likelihood kernel)

[Derezinski19] (Dual formulation)

[DWH18] (Projection DPP)

\(k\)-DPPs¶

Main idea¶

Recall from (5) that \(k\!\operatorname{-DPP}(\mathbf{L})\) can be viewed as a \(\operatorname{DPP}(\mathbf{L})\) constrained to a have fixed cardinality \(k \leq \operatorname{rank}(L)\).

To generate a sample of \(k\!\operatorname{-DPP}(\mathbf{L})\), one natural solution would be to use a rejection mechanism: draw \(S \sim \operatorname{DPP}(\mathbf{L})\) and keep it only if \(|X| = k\). However, the rejection constant may be pretty bad depending on the choice of \(k\) regarding the distribution of the number of points (9) of \(S \sim \operatorname{DPP}(\mathbf{L})\).

An alternative solution was found by [KT12] Section 5.2.2. The procedure relies on a slight modification of Step 1. of the Spectral method which requires the computation of the elementary symmetric polynomials.

In practice¶

Sampling \(k\!\operatorname{-DPP}(\mathbf{L})\) from \(\mathbf{L} \succeq 0_N\) can be done by following

Step 0.

compute the eigendecomposition of \(\mathbf{L} = V \Gamma V^{\dagger}\) in \(\mathcal{O}(N^3)\)

evaluate the elementary symmetric polynomials in the eigenvalues of \(\mathbf{L}\): \(E[l, n]:=e_l(\gamma_1, \dots, \gamma_n)\) for \(0\leq l\leq k\) and \(0\leq n\leq N\). These computations can done recursively in \(\mathcal{O}(N k)\) using Algorithm 7 of [KT12].

Step 1. is replaced by Algorithm 8 of [KT12] which we illustrate with the following pseudo-code

# Algorithm 8 of Kulesza Taskar (2012). # This is a pseudo-code of in particular Python indexing is not respected everywhere B = set({}) l = k for n in range(N, 0, -1): if Unif(0,1) < gamma[n] * E[l-1, n-1] / E[l, n]: l -= 1 B.union({n}) if l == 0: breakStep 2. is adapted to: sample from the projection DPP with correlation kernel defined by its eigenvectors \(V_{:,\mathcal{B}}\), with a cost of order \(\mathcal{O}(N k^2)\).

Important

Step 0. must be performed once and for all in \(\mathcal{O}(N^3 + Nk)\). Then the cost of getting one sample by applying Steps 1. and 2. is \(\mathcal{O}(N k^2)\).

import numpy as np

from dppy.finite_dpps import FiniteDPP

rng = np.random.RandomState(1)

r, N = 5, 10

# Random feature vectors

Phi = rng.randn(r, N)

DPP = FiniteDPP('likelihood', **{'L': Phi.T.dot(Phi)})

k = 4

for _ in range(10):

DPP.sample_exact_k_dpp(size=k, random_state=rng)

print(DPP.list_of_samples)

[[1, 8, 5, 7], [3, 8, 5, 9], [5, 3, 1, 8], [5, 8, 2, 9], [1, 2, 9, 6], [1, 0, 2, 3], [7, 0, 3, 5], [8, 3, 7, 6], [0, 2, 3, 7], [1, 3, 7, 5]]

Caution¶

Attention

Since the number of points of \(k\!\operatorname{-DPP}(\mathbf{L})\) is fixed, like for projection DPPs, it might be tempting to sample \(k\!\operatorname{-DPP}(\mathbf{L})\) using a chain rule in the way it was applied in (18) to sample projection DPPs. However, it is incorrect: sampling sequentially

where \(S_{i-1} = \{s_{1}, \dots, s_{i-1}\}\), and forgetting about the order \(s_{1}, \dots, s_{k}\) were selected does not provide a subset \(\{s_{1}, \dots, s_{k}\} \sim k\!\operatorname{-DPP}(\mathbf{L})\), in the general case. Nevertheless, it is valid when \(\mathbf{L}\) is an orthogonal projection kernel!

Here are the reasons why

First keep in mind that, the ultimate goal is to draw a subset \(S=\{ s_{1}, \dots, s_{k} \} \sim k\!\operatorname{-DPP}(\mathbf{L})\) with probability (5)

Now, if we were to use the chain rule (21) this would correspond to sampling sequentially the items \(s_1, \dots, s_{k}\), so that the resulting vector \((s_{1}, \dots, s_{k})\) has probability

Contrary to \(Z_1=\operatorname{trace}(\mathbf{L})\), the normalizations \(Z_i(s_{1}, \dots, s_{i-1})\) of the successive conditionals depend, a priori, on the order \(s_{1}, \dots, s_{k}\) were selected. For this reason we denote the global normalization constant \(Z(s_{1}, \dots, s_{k})\).

Warning

Equation (23) suggests that, the sequential feature of the chain rule matters, a priori; the distribution of \(\left(s_{1}, \dots, s_{k} \right)\) is not exchangeable a priori, i.e., it is not invariant to permutations of its coordinates. This fact, would only come from the normalization \(Z(s_{1}, \dots, s_{k})\), since \(\mathbf{L}_S\) is invariant by permutation.

Note

To see this, let’s compute the normalization constant \(Z_i(s_1, \dots, s_{i-1})\) in (23) for a generic \(\mathbf{L}\succeq 0_N\) factored as \(\mathbf{L} = VV^{\dagger}\), with no specific assumption on \(V\).

where \(\Pi_{V_{S_{i-1}, :}}\) denotes the orthogonal projection onto \(\operatorname{Span}\{V_{s_1,:}, \dots, V_{s_i-1, :}\}\), the supspace spanned the feature vectors associated to \(s_{1}, \dots, s_{i-1}\).

Then, summing (23) over the \(k!\) permutations of \(1, \dots, k\), yields the probability of drawing the subset \(S=\left\{ s_{1}, \dots, s_{k} \right\}\), namely

For the chain rule (23) to be a valid procedure for sampling \(k\!\operatorname{-DPP}(\mathbf{L})\), we must be able to identify (22) and (25), i.e., \(\mathbb{Q}[S] = \mathbb{P}[S]\) for all \(|S|=k\), or equivalently \(Z_S = e_k(L)\) for all \(|S|=k\).

Important

A sufficient condition (very likely to be necessary) is that the joint distribution of \((s_{1}, \dots, s_{k})\), generated by the chain rule mechanism (23) is exchangeable (invariant to permutations of the coordinates). In that case, the normalization in (23) would then be constant \(Z(s_{1}, \dots, s_{k})=Z\) . So that \(Z_S\) would in fact play the role of the normalization constant of (25), since it would be constant as well and equal to \(Z_S = \frac{Z}{k!}\). Finally, \(Z_S = e_k(L)\) by identification of (22) and (25).

This is what we can prove in the particular case where \(\mathbf{L}\) is an orthogonal projection matrix.

To do this, denote \(r=\operatorname{rank}(\mathbf{L})\) and recall that in this case \(\mathbf{L}\) satisfies \(\mathbf{L}^2=\mathbf{L}\) and \(\mathbf{L}^{\dagger}=\mathbf{L}\), so that it can be factored as \(\mathbf{L}=\Pi_{\mathbf{L}}=\mathbf{L}^{\dagger}\mathbf{L}=\mathbf{L}\mathbf{L}^{\dagger}\)

Finally, we can plug \(V=\mathbf{L}\) in (24) to obtain

Thus, the normalization \(Z(s_1, \dots, s_k)\) in (24) is constant as well equal to

where the last equality is a simple computation of the elementary symmetric polynomial

Important

This shows that, when \(\mathbf{L}\) is an orthogonal projection matrix, the order the items \(s_1, \dots, s_r\) were selected by the chain rule (23) can be forgotten, so that \(\{s_1, \dots, s_r\}\) can be considered as valid sample of \(k\!\operatorname{-DPP}(\mathbf{L})\).

# For our toy example, this sub-optimized implementation is enough

# to illustrate that the chain rule applied to sample k-DPP(L)

# draws s_1, ..., s_k sequentially, with joint probability

# P[(s_1, ..., s_k)] = det L_S / Z(s_1, ..., s_k)

#

# 1. is exchangeable when L is an orthogonal projection matrix

# P[(s1, s2)] = P[(s_2, s_1)]

# 2. is a priori NOT exchangeable for a generic L >= 0

# P[(s1, s2)] /= P[(s_2, s_1)]

import numpy as np

import scipy.linalg as LA

from itertools import combinations, permutations

k, N = 2, 4

potential_samples = list(combinations(range(N), k))

rank_L = 3

rng = np.random.RandomState(1)

eig_vecs, _ = LA.qr(rng.randn(N, rank_L), mode='economic')

for projection in [True, False]:

eig_vals = 1.0 + (0.0 if projection else 2 * rng.rand(rank_L))

L = (eig_vecs * eig_vals).dot(eig_vecs.T)

proba = np.zeros((N, N))

Z_1 = np.trace(L)

for S in potential_samples:

for s in permutations(S):

proba[s] = LA.det(L[np.ix_(s, s)])

Z_2_s0 = np.trace(L - L[:, s[:1]].dot(LA.inv(L[np.ix_(s[:1], s[:1])])).dot(L[s[:1], :]))

proba[s] /= Z_1 * Z_2_s0

print('L is {}projection'.format('' if projection else 'NOT '))

print('P[s0, s1]', proba, sep='\n')

print('P[s0]', proba.sum(axis=0), sep='\n')

print('P[s1]', proba.sum(axis=1), sep='\n')

print(proba.sum(), '\n' if projection else '')

L is projection

P[s0, s1]

[[0. 0.09085976 0.01298634 0.10338529]

[0.09085976 0. 0.06328138 0.15368033]

[0.01298634 0.06328138 0. 0.07580691]

[0.10338529 0.15368033 0.07580691 0. ]]

P[s0]

[0.20723139 0.30782147 0.15207463 0.33287252]

P[s1]

[0.20723139 0.30782147 0.15207463 0.33287252]

1.0000000000000002

L is NOT projection

P[s0, s1]

[[0. 0.09986722 0.01463696 0.08942385]

[0.11660371 0. 0.08062998 0.20535251]

[0.01222959 0.05769901 0. 0.04170435]

[0.07995922 0.15726273 0.04463087 0. ]]

P[s0]

[0.20879253 0.31482896 0.13989781 0.33648071]

P[s1]

[0.20392803 0.4025862 0.11163295 0.28185282]

1.0